I built an AI-gen video detection model and browser extension in a month

Have you ever wondered while browsing the internet whether the video or image you’re viewing is real or AI-generated? People say, “Seeing is believing,” but that’s less true in the AI era. Nowadays, generating photorealistic videos with audio is easier and cheaper than ever.

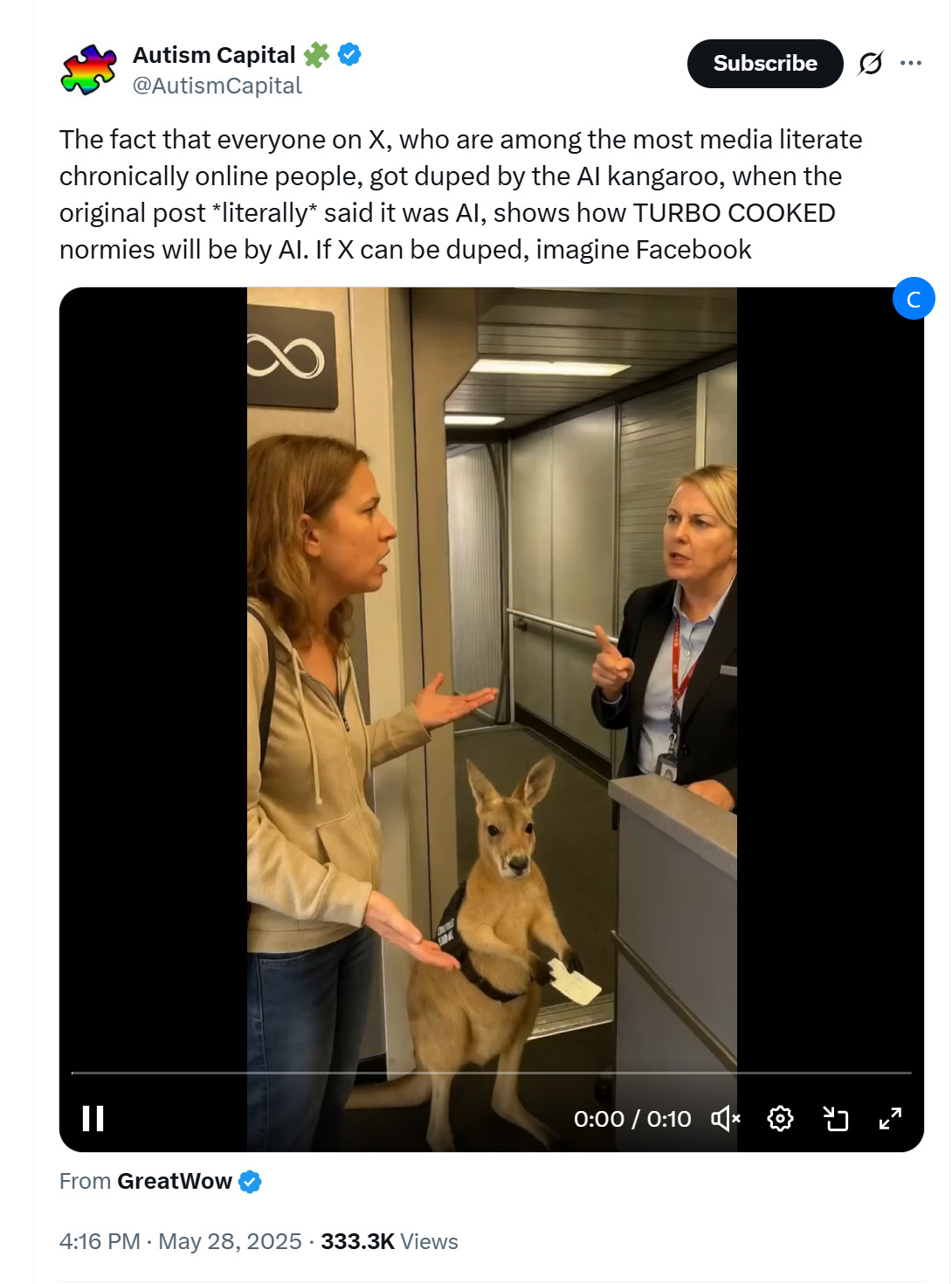

If you’ve followed trends on X, you may have noticed many users liked and reposted a video of a kangaroo being denied boarding on an airplane as an emotional support animal, ticket in paw. As adorable as it was, the video was AI-generated. The ticket’s text is gibberish. I call it “AI fonts.” I’m no linguistics expert, but the verbal exchange also felt off.

I’ve faced the same issue. While browsing X, I’ve retweeted content, only to later realize it was AI-generated, which was embarrassing. I wished for an easy-to-use tool to distinguish AI-generated content from real content. I tested several online tools claiming to detect AI-generated content, but none worked as expected. So, I spent the past month training a model and building a browser extension focused on detecting AI-generated videos on X. I named it CakeLens, inspired by the viral “Is it a cake?” videos. Instead of identifying cakes, it detects AI-generated content. I chose the name because I wanted it to be as easy as “a piece of cake” to use.

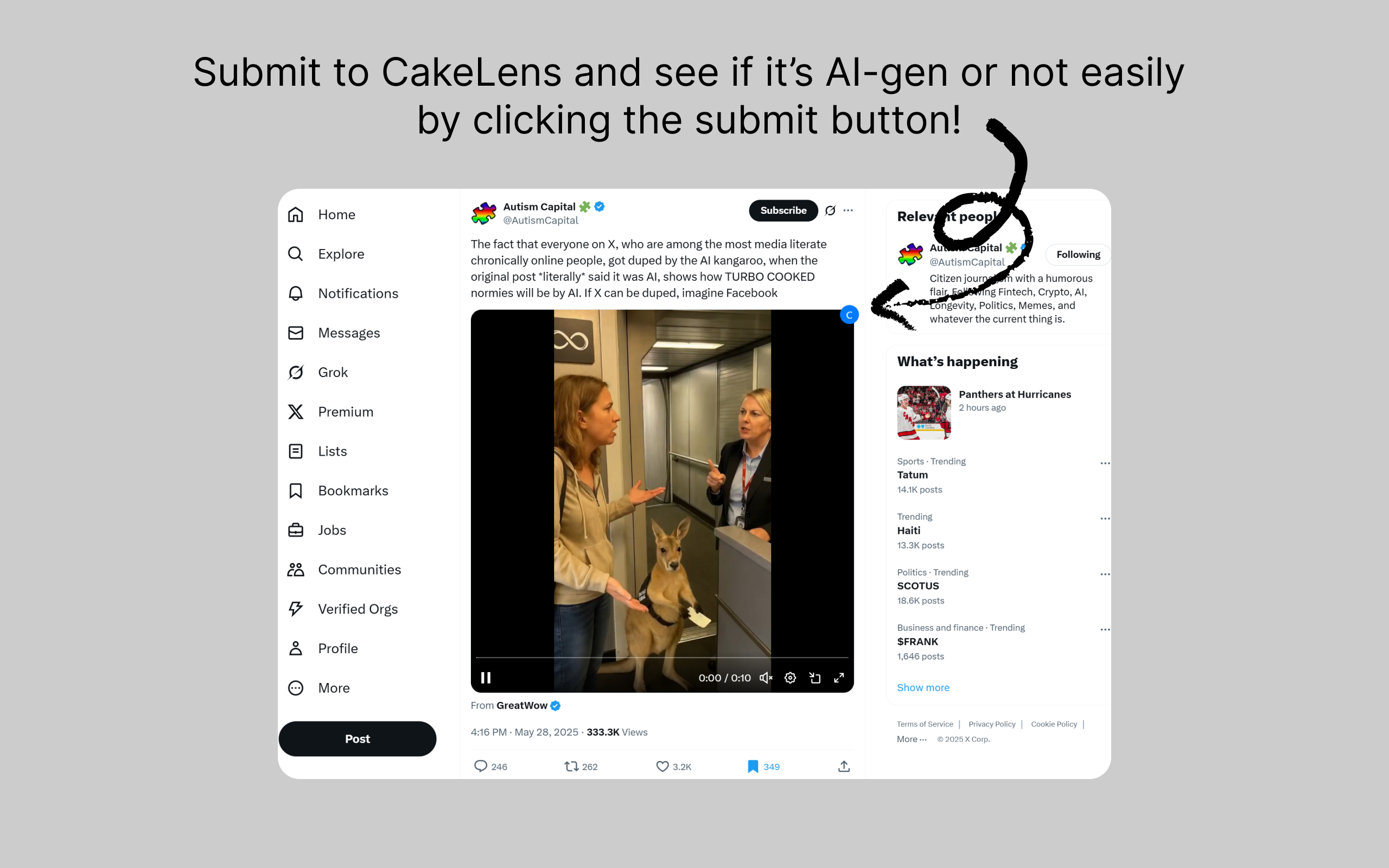

CakeLens is now available on the Chrome Web Store. You need to sign up for an account at CakeLens.ai to use it. Once set up, a button appears in the upper-right corner of videos on X when you hover over them. Click it to submit the video for AI-generated content detection.

Screenshot of X.com showing the CakeLens button on the upper-right corner when hovering on a video

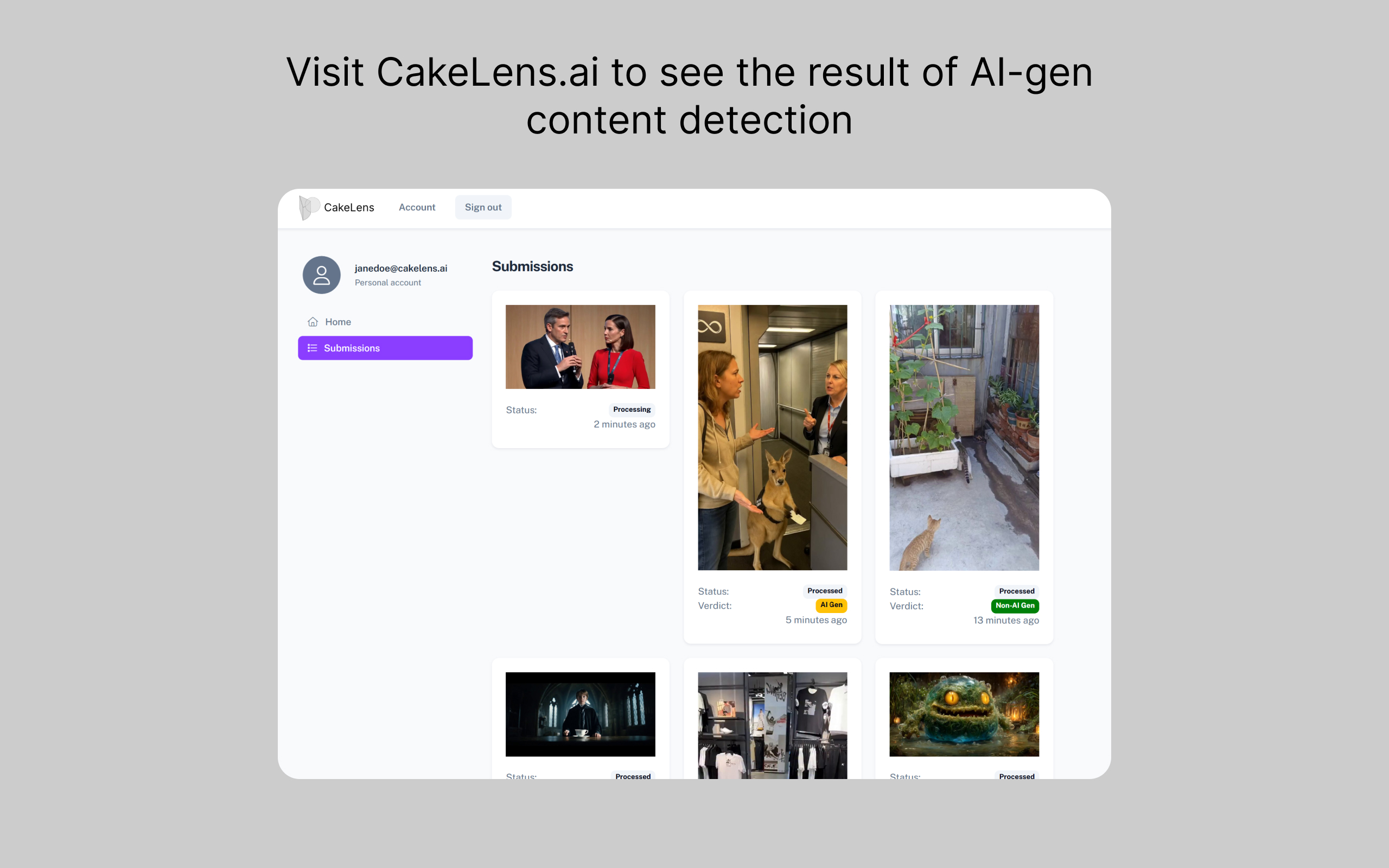

View the detection results on the submissions page of your CakeLens account.

Screenshot of the submission page of CakeLens

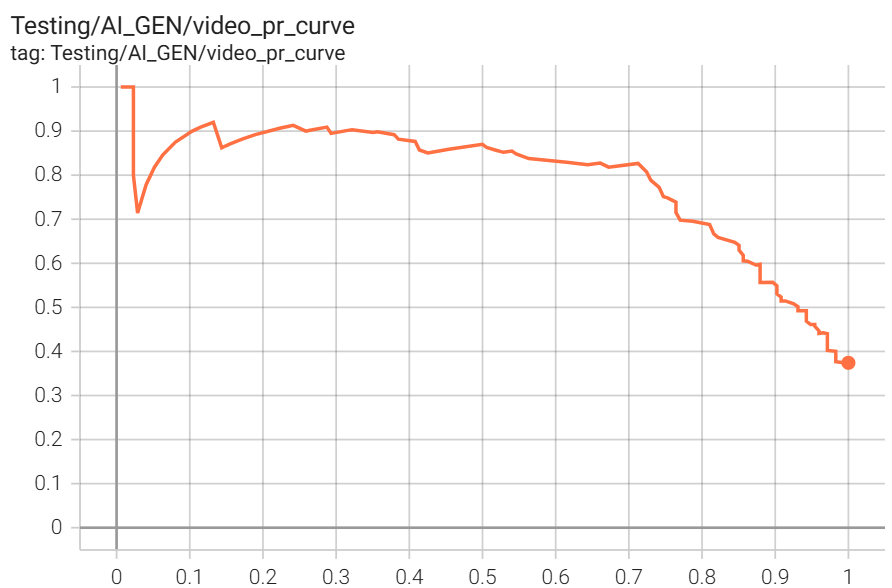

The latest version of my model achieves 77% precision and 74% recall on the validation dataset at a 50% threshold.

Screenshot of TensorBoard PR curve for CakeLens' latest model
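For readers less familiar with these metrics, here is a minimal sketch of how precision and recall are computed at a given threshold. The scores and labels below are made-up illustration data, not CakeLens validation results, and `precision_recall` is a hypothetical helper rather than code from the project.

```python
def precision_recall(scores, labels, threshold=0.5):
    """Return (precision, recall) for binary labels at a score threshold."""
    tp = fp = fn = 0
    for score, label in zip(scores, labels):
        predicted_ai = score >= threshold
        if predicted_ai and label:
            tp += 1  # flagged as AI-generated, and it really is
        elif predicted_ai and not label:
            fp += 1  # flagged as AI-generated, but it is real
        elif not predicted_ai and label:
            fn += 1  # missed an AI-generated sample
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Hypothetical model outputs (probability of "AI-generated") and ground truth.
scores = [0.92, 0.81, 0.40, 0.65, 0.30, 0.10, 0.55, 0.20]
labels = [1, 1, 1, 0, 0, 0, 1, 1]

precision, recall = precision_recall(scores, labels, threshold=0.5)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Raising the threshold usually trades recall for precision, which is the trade-off a PR curve visualizes.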

I’ve learned a lot from building this pet project, and today I’m sharing those lessons!

...

Read the full article

Nvidia GPU on bare metal NixOS Kubernetes cluster explained

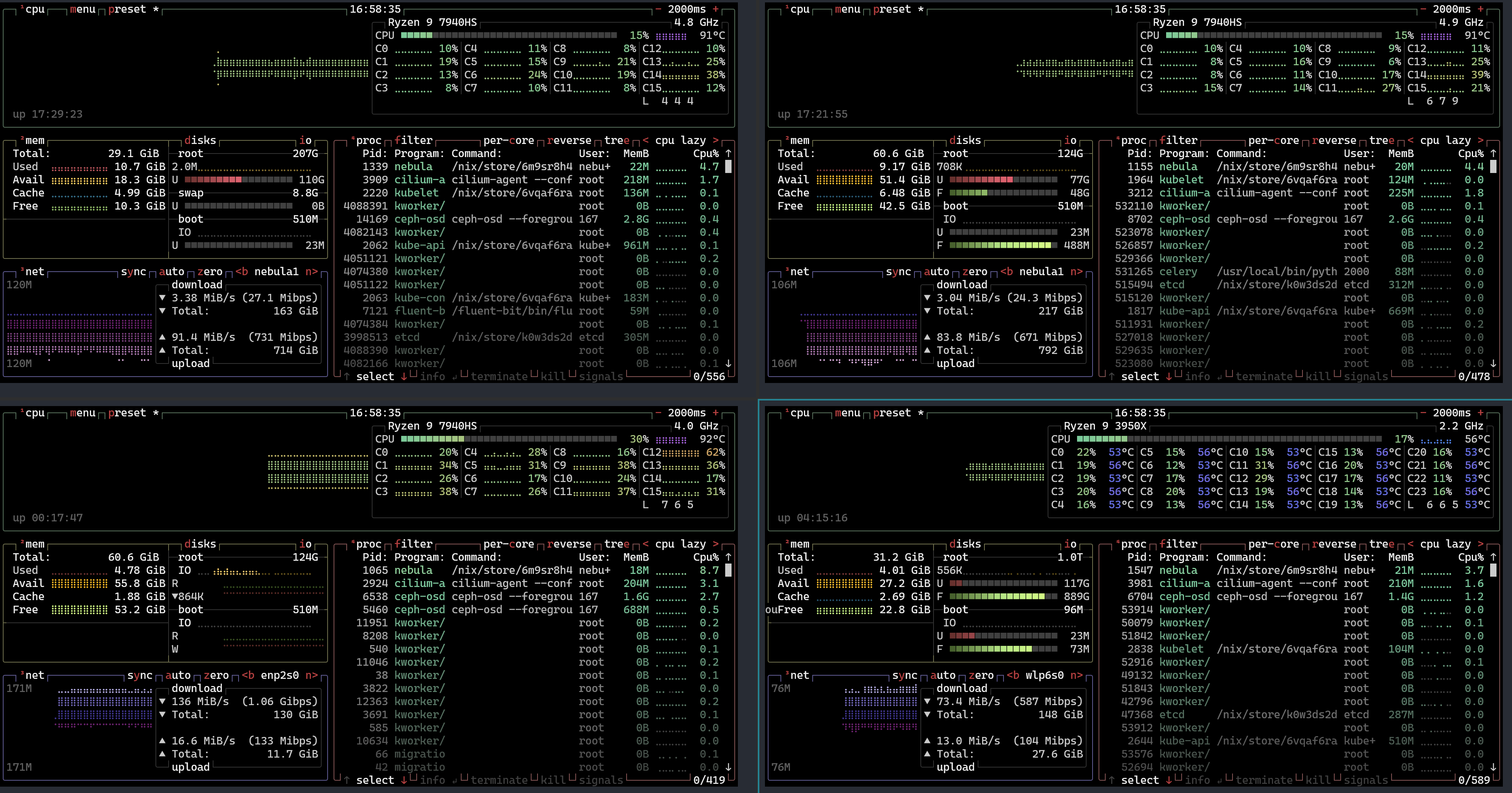

Since publishing the second MAZE (Massive Argumented Zonal Environments) article, I have realized that the framework is getting more mature, but I need a way to run it at a larger scale. In the past, I built a bare metal Kubernetes cluster out of three mini PCs interconnected with USB4. I also have a retired workstation PC with an Nvidia GeForce RTX 2080 Ti. I wondered, why not add this PC to the three-node Kubernetes cluster and configure Kubernetes to make CUDA work? Once one GPU node is configured, extending the computing capacity with more GPUs will be easier.

Screenshot of btop of my four-node Kubernetes cluster, with three mini PCs connected via USB4 and the newly added retired workstation sporting an Nvidia GeForce RTX 2080 Ti.

With that in mind, I spent a week on this side quest. It turned out to be way harder than I expected. I learned a lot going down this rabbit hole, and I found other holes along the way. Getting the Nvidia device plugin up and running in Kubernetes is hard, and running it on top of NixOS makes it even more challenging. Regardless, I finally managed to get it to work! Seeing it running for the first time was a very joyful moment.

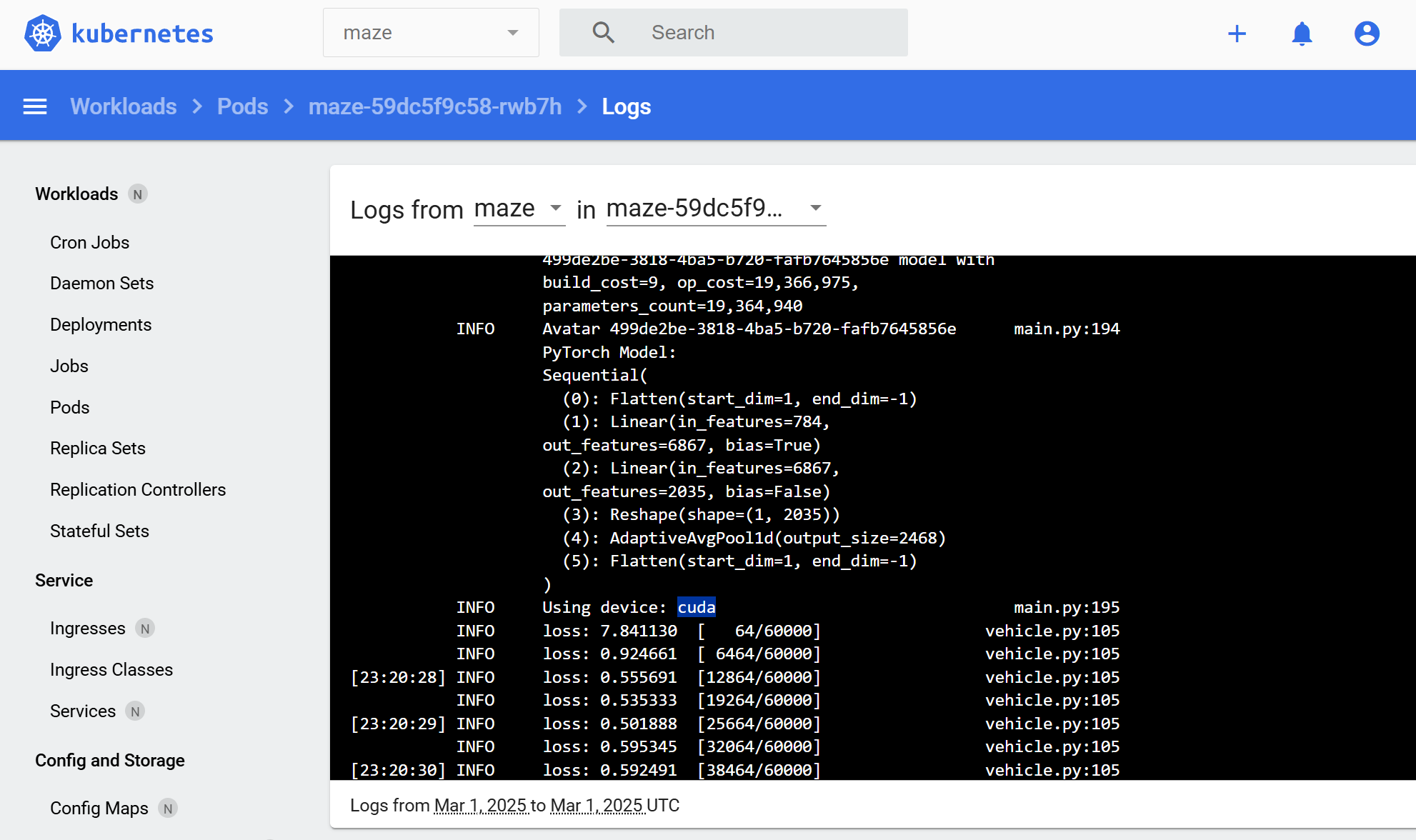

Screenshot of the Kubernetes dashboard shows the logs of a MAZE pod running an experiment on a CUDA device

An article like this could be helpful as more software engineers get into machine learning. Running everything in the cloud is costly, and there are also privacy concerns for personal use, so an Nvidia GPU on a local bare-metal Kubernetes cluster is still a very tempting option. Here, I would like to share my experience setting up a bare metal Kubernetes cluster with the Nvidia GPU CDI plugin enabled in NixOS.
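To give a rough idea of what "making CUDA work" looks like from the workload side, here is a minimal sketch that builds a pod manifest requesting one GPU through the `nvidia.com/gpu` resource, the resource name the Nvidia device plugin advertises. The pod name and the image are placeholders I made up, not values from my cluster. Depending on how the container runtime is configured, extra fields such as a runtime class may also be needed.

```python
import json


def gpu_pod_manifest(name, image, gpus=1):
    """Build a Kubernetes Pod manifest (as a dict) that requests Nvidia GPUs."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Assumes the GPU runtime makes nvidia-smi available
                    # inside the container.
                    "command": ["nvidia-smi"],
                    # The scheduler only places this pod on a node whose
                    # device plugin advertises this resource.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


# Placeholder name and image: substitute a CUDA-capable image you trust.
manifest = gpu_pod_manifest("cuda-smoke-test", "example.invalid/cuda-base:latest")
print(json.dumps(manifest, indent=2))
```

Since `kubectl` accepts JSON as well as YAML, the printed output can be piped into `kubectl apply -f -` as a quick smoke test.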

...

Read the full article

MAZE - My AI models are finally evolving!

This is the second article in the MAZE machine learning series. To learn more about what MAZE (Massive Argumented Zonal Environments) is, you can read the other articles here:

More than a week has passed since I published my previous MAZE article. I have spent most of my spare time outside of work on this project, and I have already made significant progress. I can’t believe how much I’ve done on my own in the past week, and I am very proud to have achieved this much in such a short time. Today, I want to share the latest update on the MAZE project.

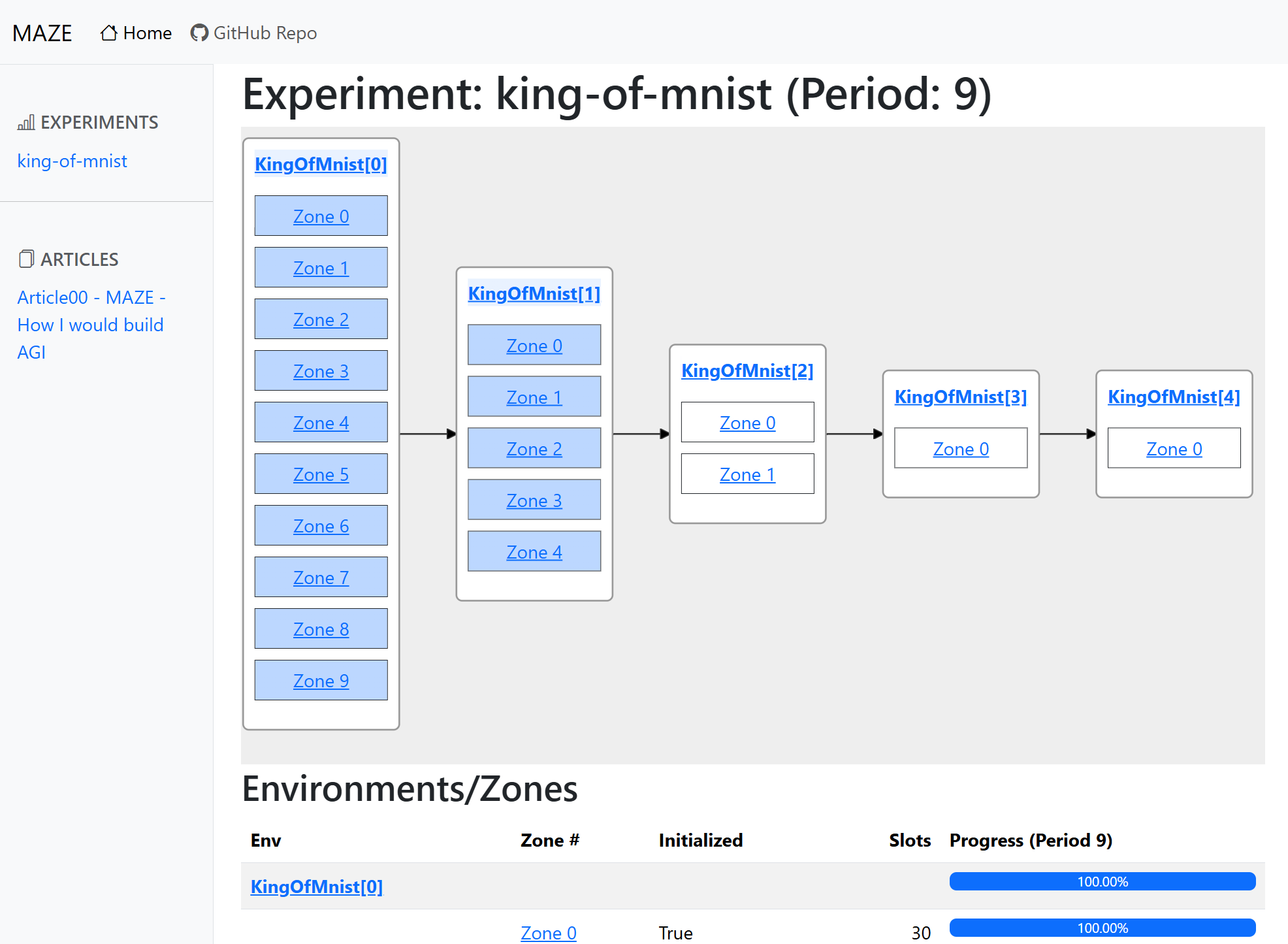

MAZE Web app

First, as promised in the previous article, I wanted to build a UI that makes research much easier and to publish the data so that anybody interested in machine learning can view those neural networks. People can learn a new trick or two, or even start new research based on the latest effective patterns found through MAZE. And yes, I delivered! Here are some screenshots from the web app:

Screenshot of MAZE web app experiment page

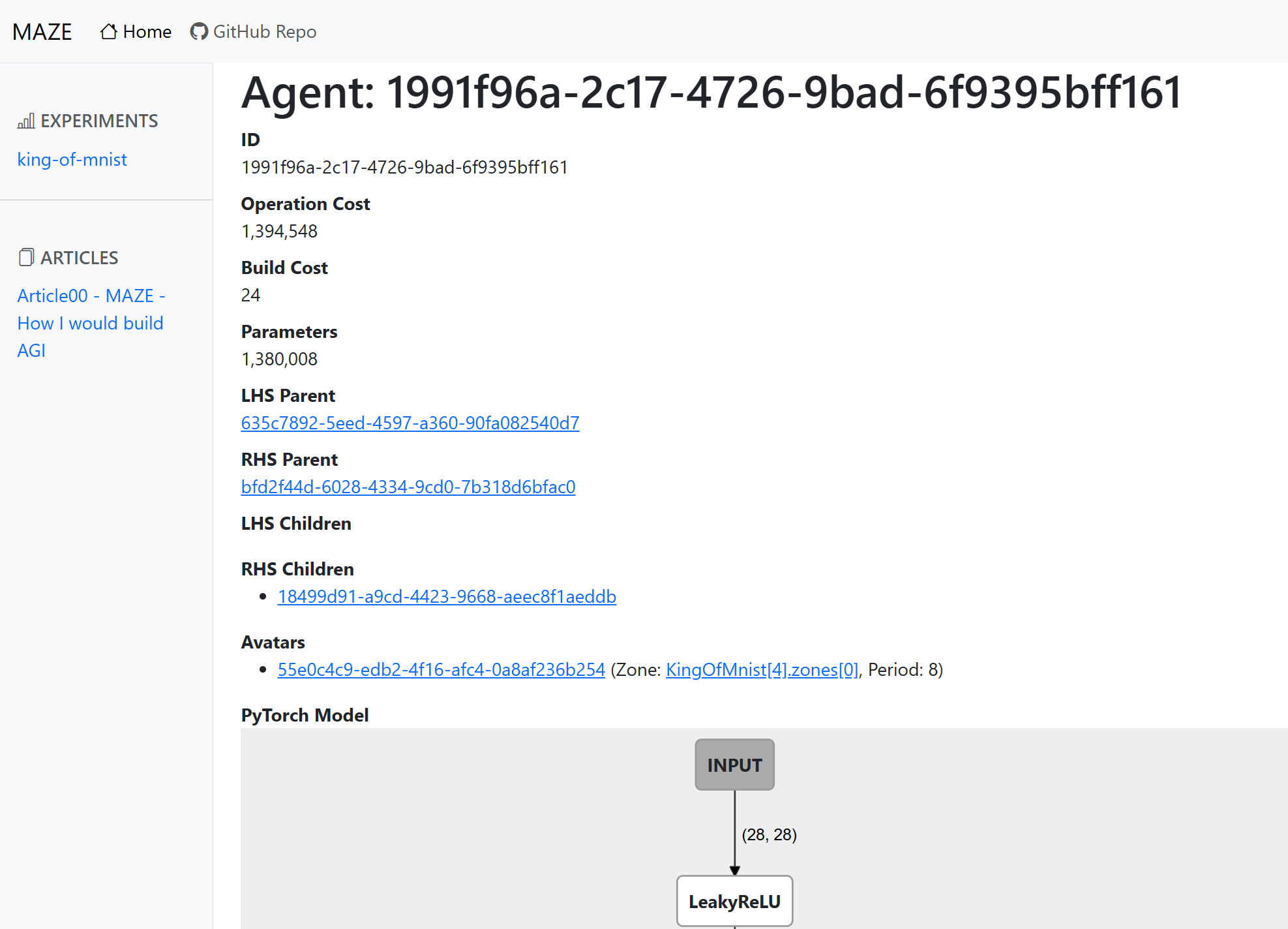

Screenshot of MAZE web app agent page

I published the web app here, and you can visit it yourself to find out:

As you can see, the domain name has article01 as a prefix because I will be moving fast and making lots of changes, and I don’t want to maintain backward compatibility.

So, this website will be a snapshot of my initial test rounds.

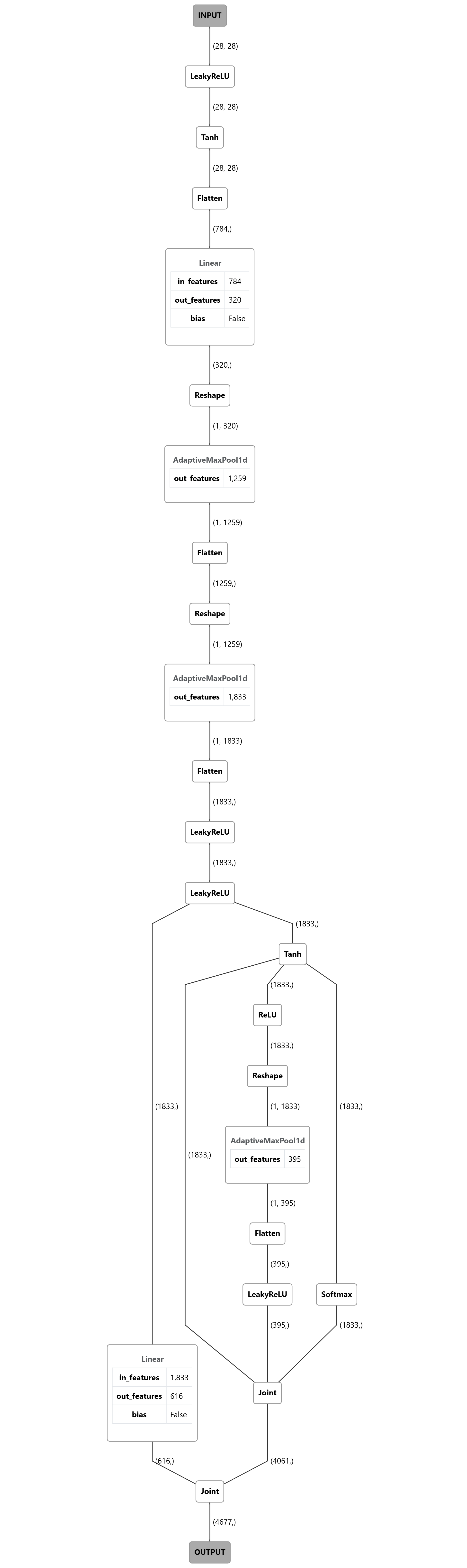

During the development of MAZE, I realized it’s tough to learn how a model works by reading its gene code, so I made it possible to view each model’s PyTorch module DAG (directed acyclic graph) directly. Here are some really interesting examples, like this complex one with many branches:

DAG diagram of a neural network with linear and maxpool modules at the beginning, then connecting to many branches at the bottom, with a few modules before the output node

I will show you more interesting examples in the following sections.

...

Read the full article

MAZE - How I would build AGI

Update: This is the first article in the MAZE machine learning series. You can read other articles here:

I can’t believe I am publishing this. I’ve been thinking about artificial general intelligence and how to build it for a very long time. Call me crazy if you want, but I have an idea for building it differently from the mainstream approach. Instead of using backpropagation to hammer down on smartly handcrafted networks, I want to build a system that can produce arbitrary neural networks by evolving and mutating genes in a series of controlled environments. Of course, every cool approach deserves an awesome acronym. That’s why I named it MAZE (Massive Argumented Zonal Environments) 😎:

MAZE stands for Massive Argumented Zonal Environments

I spent last week building a prototype that can generate random neural networks based on a gene sequence. Shockingly, I haven’t even implemented offspring generation or gene mutation yet, but some of the randomly generated networks I saw during development have already performed better than the ones I crafted manually while I was learning.
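To make the idea of a gene sequence more concrete, here is a toy sketch of decoding a list of integers into layer descriptions and randomly mutating it. This is not how MAZE encodes genes. The alphabet in `LAYER_KINDS`, the pairing of op code and size, and the helper names are all assumptions made up for illustration.

```python
import random

# Hypothetical gene alphabet: an op code selects one of these layer kinds.
LAYER_KINDS = ["linear", "relu", "maxpool", "dropout"]


def decode(genes):
    """Read genes in (op code, size) pairs and return a list of layer specs.

    A trailing unpaired gene is ignored. The size is only meaningful for
    layers that take one; it is kept for every layer to keep the toy simple.
    """
    layers = []
    for i in range(0, len(genes) - 1, 2):
        kind = LAYER_KINDS[genes[i] % len(LAYER_KINDS)]
        size = 8 * (1 + genes[i + 1] % 16)  # sizes from 8 to 128
        layers.append((kind, size))
    return layers


def random_genes(length, rng):
    """Generate a random gene sequence of byte-sized integers."""
    return [rng.randrange(256) for _ in range(length)]


def mutate(genes, rate, rng):
    """Replace each gene with a random value with probability `rate`."""
    return [rng.randrange(256) if rng.random() < rate else g for g in genes]


rng = random.Random(0)  # seeded so runs are repeatable
parent = random_genes(8, rng)
child = mutate(parent, rate=0.25, rng=rng)
print(decode(parent))
print(decode(child))
```

A real system would then build and score each decoded network, keeping the genes of the better performers for the next generation.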

...

Read the full article